AFTER BLETCHLEY: NOW BUILD THE BEST AI TOOLS

AI investor and entrepreneur James Dancer (Keble, 1994) asks what comes next, following the AI Safety Summit at Bletchley Park

Published: 14 November 2023

Author: James Dancer

Artificial intelligence will define our societies and economies to a greater degree than any single human technology since the printing press. As with the printing press, the computer, and every human tool since the hand axe, this new technology has the potential for both good and harm. If we want to ensure the greatest good, our focus should now be on building the best possible AI tools, for the most valuable purposes, with the best of our talents.

This month, Bletchley Park hosted the global summit on AI safety, at which the UK led major powers in declaring the potential of AI ‘to transform and enhance human wellbeing, peace and prosperity.’ While the world's attention has been on Bletchley and on the latest developments in large language models, that attention is now shifting. How do we build AI that delivers the greatest benefit? How can we design new drugs for a healthier old age, or new materials to accelerate the energy transition? How do we bring these advances to traditional and complex industries, which are far harder to digitise than social media, search, or marketing? What systems do we need to use the right data, and the right models, for the right purpose?

Work at the cutting edge of applied AI is now turning towards these challenges. The most exciting developments are being driven by world-class research that works on unexploited data sets and translates the new insights into large-scale impact. The most public example has been DeepMind's remarkable work on protein structures, which produced a database of over 200 million predicted protein structures and promises a revolution in the development of new medicines.

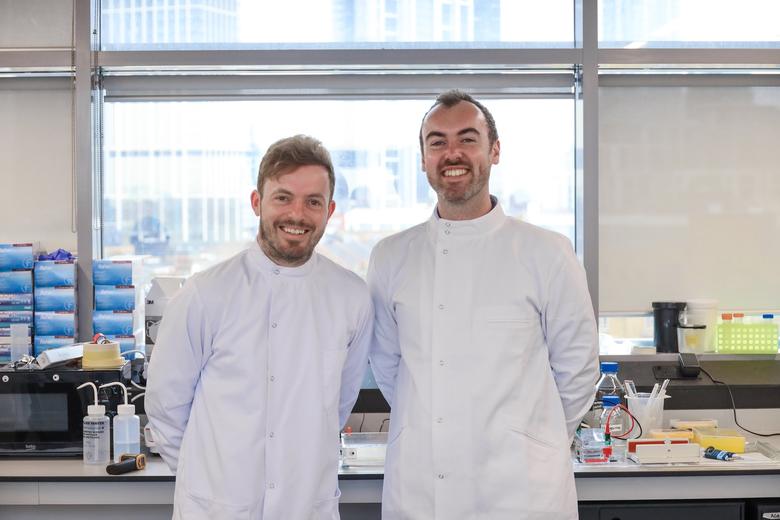

Similar work is underway, often quietly, at universities like Oxford and Stanford, in large-scale AI ventures, and with their major corporate partners. At Oxford, researchers are working on how to identify new drugs in a fraction of the time and at a fraction of the cost, in partnership with major pharmaceutical groups, mirroring the successful collaboration on a coronavirus vaccine. They’re identifying new ways to address neurological conditions, using the UK’s world-class genetic data. They’re designing AI systems to help doctors identify more quickly which patients need to be admitted to intensive care, so that care doesn’t come too late. They’re discovering new materials faster, to replace materials like silicon and rare earth elements, making it easier and cheaper to fuel the energy transition. They’re using deep learning in engineering processes, helping to design new systems and components in a fraction of the time it previously took. They’re building tools to advise lawyers, predict wildfires, spot fakes and misinformation, and even to monitor and ensure the safety of AI systems themselves. And they’re working on fundamental approaches, searching for the next advance in deep learning.

Oxford alumni are also taking these new approaches to global scale, in new and established ventures: in large AI firms, in the largest tech groups, in major corporations, and in hundreds of start-ups. Oxford alumni were in the teams that developed the ‘transformer’ architecture on which large language models are based, and that predicted those protein structures at DeepMind. Hundreds of Oxford alumni are helping to build the most cutting-edge AI ventures, from OpenAI to Nvidia, as software engineers, AI researchers, marketers, strategists, and ethicists. They hold leadership positions across all of these organisations, and have founded some of the most transformational firms.

We demonstrated in the pandemic that collaboration between leading universities, major corporations and new ventures, backed by experienced investors, could have global impact for the greatest possible good. Work is now underway at Oxford on how to recapture that collaboration to build the best possible AI tools, and on how to advance ground-breaking work in both fundamental and applied AI.

All of this gives real cause for hope. We all want to avoid a world where AI is controlled by just a handful of firms, working on a narrow set of problems. Work at Oxford and other leading institutions tells us that breakthrough developments in AI will depend not only on access to computing power, which only a handful of tech giants can afford, but also on access to data.

Our greatest risk is not that our present prediction machines somehow become sentient and take over the world. Our greatest risk is that we fail to exploit the advantages we already have, across as broad and democratic a range of challenges as possible. If we want AI tools which have the most positive impact, we have to design them that way. If we want those tools to reflect our values and ethics, we have to be the ones building them.

James Dancer (Keble, 1994) chairs the University’s Alumni Board, and is an investor and entrepreneur in artificial intelligence. He has worked with Helsing, one of Europe’s leading AI ventures, at McKinsey & Company, and with the climate investor AiiM Partners. He is supporting new initiatives at Oxford to advance applied AI.

AI at Oxford

Experts at Oxford are developing fundamental AI tools, using AI to tackle global challenges, and addressing the ethical issues of new technologies.

AI is everywhere and everyone's talking about it. Throughout November and December 2023, join us as we hear from world-leading experts about what AI means and how it is affecting our society, and discover the groundbreaking ways artificial intelligence is being applied at Oxford.

Find out more at https://www.ox.ac.uk/ai

Leading image: Getty Images. Inset portrait of James Dancer: James Dancer.